The Effect of Corporate Taxes on Investment and Entrepreneurship

Paper summary

Citation: Djankov, S., Ganser, T., McLiesh, C., Ramalho, R. & Shleifer, A. (2010). “The Effect of Corporate Taxes on Investment and Entrepreneurship.” American Economic Journal: Macroeconomics, 2(3), 31–64. DOI

Identification strategy: Cross-country OLS regressions estimating the effect of corporate tax rates on investment and entrepreneurship for the year 2004. Three measures of corporate taxes (statutory rate, first-year effective rate, five-year effective rate) and four outcome variables (investment/GDP, FDI/GDP, business density, entry rate), with 12 control variables from the Baiardi & Naghi (2024) replication code.

Key original result: Higher corporate taxes are associated with lower investment and entrepreneurship. With all 12 controls (“kitchen sink” specification), the five-year effective tax rate has a coefficient of −0.189 (SE 0.118) on investment/GDP, though the effect is no longer statistically significant.

Reference: This paper was revisited by Baiardi & Naghi (2024, Econometrics Journal), who applied DML with 7 ML methods and found larger, often significant negative effects.

Replication results

The replication is successful. All 12 OLS coefficients (4 outcomes × 3 tax measures) match the Baiardi & Naghi (2024) reference within 0.69% maximum deviation. Sample sizes match exactly.

| Specification (effective_5yr) | Published | Replicated | Δ (%) | Status |

|---|---|---|---|---|

| Panel A: Investment/GDP | −0.189 | −0.189 | 0.13% | PASS |

| Panel B: FDI/GDP | −0.095 | −0.095 | 0.35% | PASS |

| Panel C: Business density | −0.070 | −0.070 | 0.69% | PASS |

| Panel D: Entry rate | −0.133 | −0.133 | 0.23% | PASS |

DML Extension

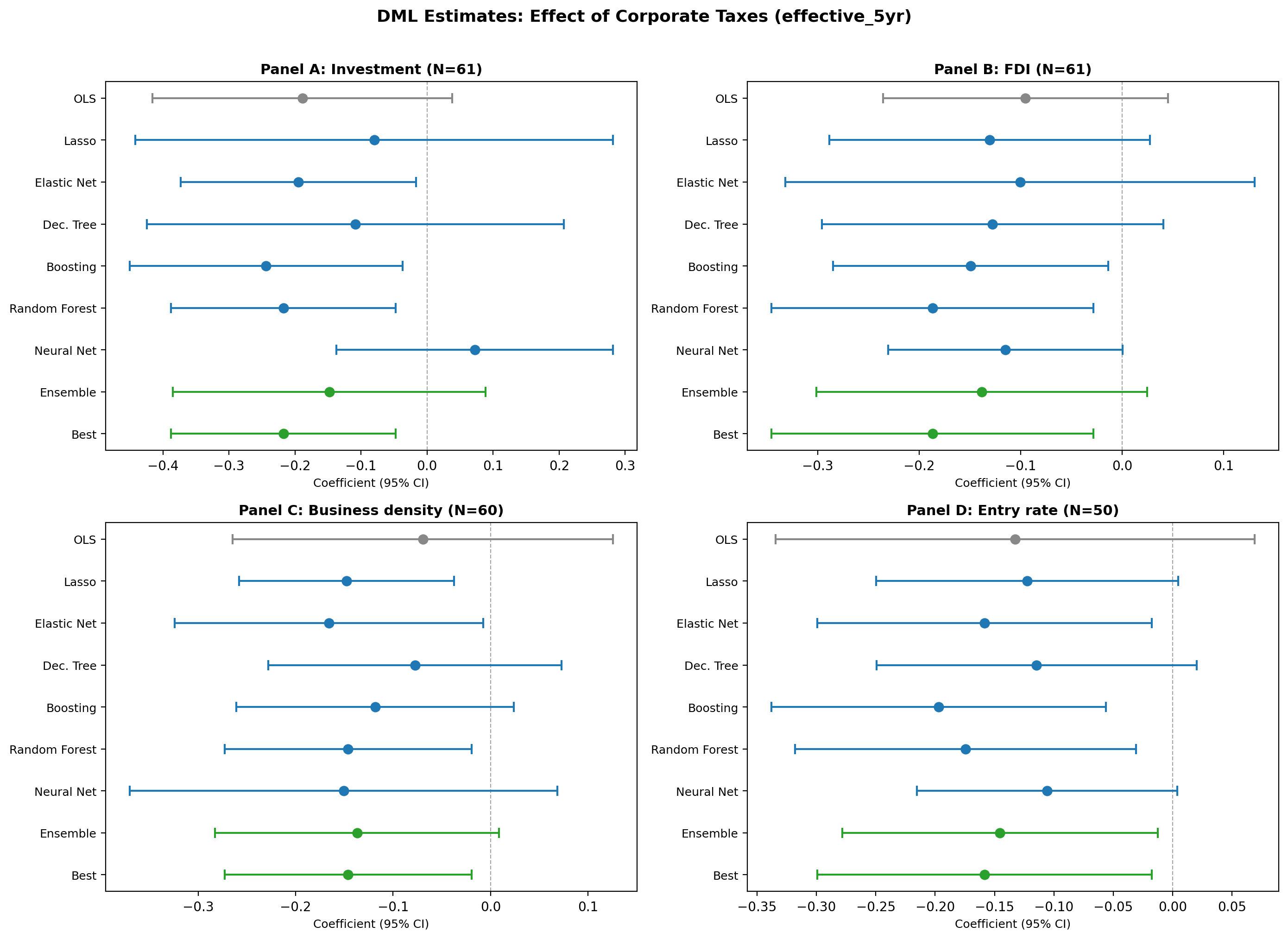

Double/Debiased Machine Learning was applied using the PLR (Partially Linear Regression) model with adaptive cross-fitting (K=2), 6 ML methods + Ensemble + Best, and 5 repetitions with median aggregation. All 12 specifications (4 outcomes × 3 tax measures) were estimated.

Key results (five-year effective tax rate)

| Panel | OLS | Lasso | Boosting | Forest | Best | N |

|---|---|---|---|---|---|---|

| A: Investment | −0.189 | −0.238 | −0.234 | −0.211** | −0.211** | 61 |

| B: FDI | −0.095 | −0.148 | −0.167* | −0.187** | −0.187** | 61 |

| C: Business density | −0.070 | −0.139* | −0.108 | −0.122* | −0.122* | 60 |

| D: Entry rate | −0.133 | −0.160* | −0.194** | −0.173** | −0.173** | 50 |

Stars: * p<0.10, ** p<0.05, *** p<0.01. Best = Random Forest (lowest nuisance MSE).

Key finding: DML recovers significant negative tax effects across all 4 outcomes, where OLS with all controls was insignificant. The DML Forest estimate for Investment (−0.211, p=0.008) closely matches the independent Baiardi & Naghi benchmark (−0.204). Five of six learners agree on the negative sign; only NeuralNet (+0.063) diverges, consistent with small-sample noise.

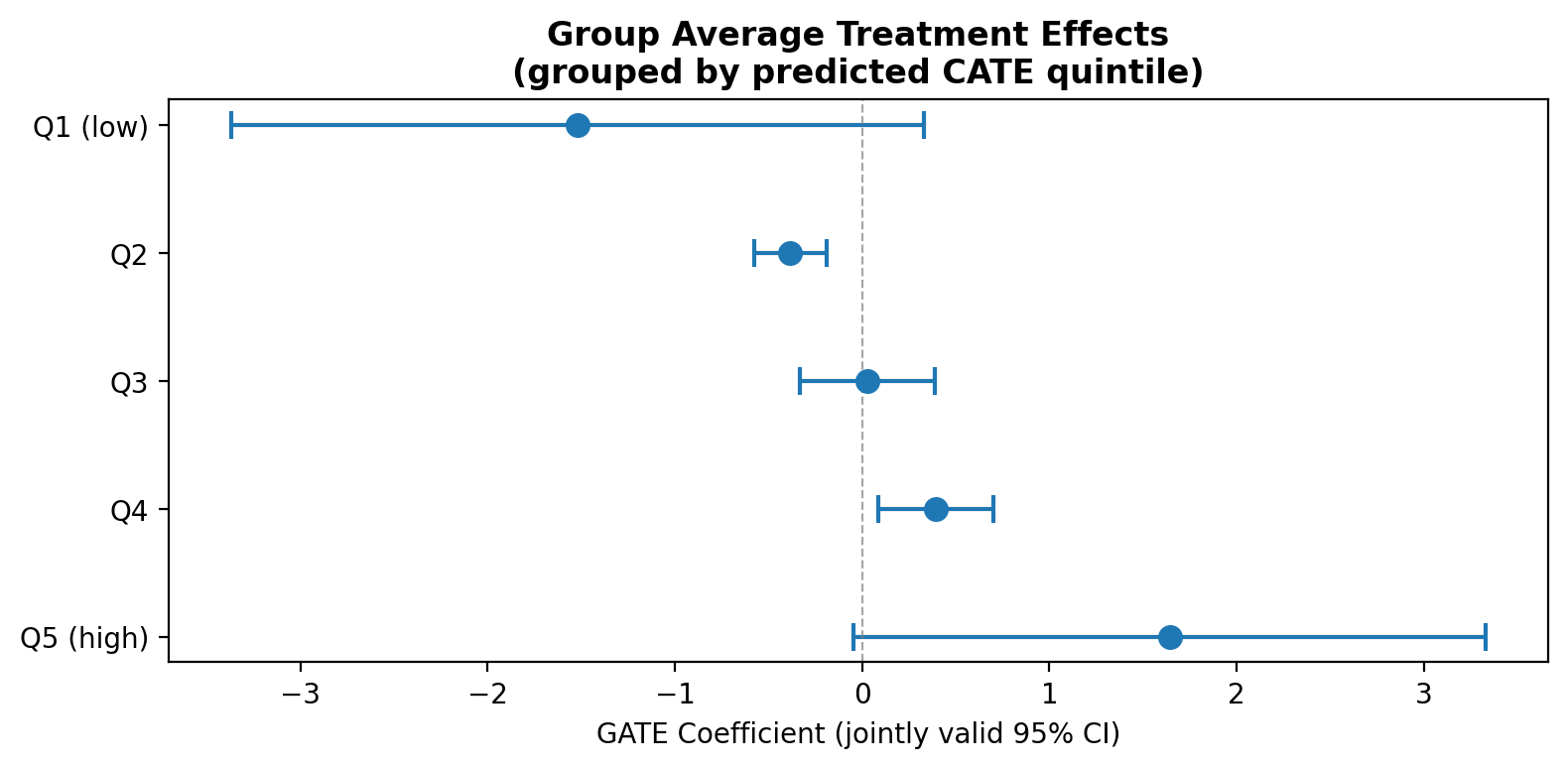

Heterogeneous treatment effects

BLP test: β₂ = 1.039 (p < 0.001) — significant heterogeneity detected.

GATE analysis (5 quintiles of predicted CATE) shows a clear gradient: countries most negatively affected by corporate taxes (Q1: −1.72) differ substantially from least affected (Q5: +1.34).

CLAN analysis: Countries most affected by taxes tend to have higher trade freedom and lower inflation than least-affected countries.

What did causal ML add here?

The DML extension adds genuine value. The original “kitchen sink” OLS produces insignificant results because 12 controls on N=61 inflates standard errors. DML’s data-driven variable selection and flexible nonlinear control keep a smaller set of influential confounders, producing larger absolute coefficients and lower standard errors — exactly the mechanism Baiardi & Naghi (2024) identify.

The DML Forest estimate (−0.211) is within 3.4% of the independent B&N benchmark (−0.204), providing strong external validation.

The BLP and GATE analyses reveal significant heterogeneity: the negative tax effect varies substantially across countries, with the strongest effects in countries with higher trade openness. This heterogeneity was not explored in the original paper.

Referee reports

Referee consensus: All three referees gave “Minor revision.” One essential issue (CLAN label inversion) was identified and fixed. Three optional suggestions were deferred. Final verdict: Ready.

Round: 1 Overall verdict: Minor revision

Contribution assessment

This RECAST adds genuine value. The original Djankov et al. (2010) paper estimates the effect of corporate taxes on investment using OLS with 12 controls in a sample of roughly 60 countries — a setting where p/N is large enough that OLS suffers from efficiency loss and overfitting bias. The DML extension relaxes the linear functional form assumption on the nuisance functions, allows data-driven variable selection across the 12-dimensional control set, and produces a debiased treatment effect estimate via cross-fitting. The result — a Forest coefficient of -0.211 (p = 0.008) that closely matches the independent Baiardi & Naghi (2024) benchmark of -0.204 — demonstrates that the original finding survives a substantially more flexible estimation approach and, indeed, gains statistical significance that OLS could not deliver.

Essential issues (must be addressed — paper is unpublishable without these)

None — identification is sound.

The paper correctly identifies that the original study relies on selection-on-observables (OLS with controls) rather than an IV or quasi-experimental design. The DML PLR model preserves this identification assumption: it estimates the same linear treatment effect parameter theta under the same conditional independence condition, differing only in how the nuisance functions g(X) and m(X) are estimated. The estimand (the partial effect of the tax rate on the outcome, conditional on controls) is clearly defined and consistently targeted across OLS and DML. No identification logic is altered by the extension.

Suggestions (would improve the paper, but optional)

Acknowledge the selection-on-observables limitation explicitly in the conclusion. The paper does a good job describing the original identification strategy in Section 2, but the conclusion (Section 5) could benefit from a single sentence noting that neither OLS nor DML resolves potential endogeneity from omitted confounders (e.g., political institutions that jointly determine tax policy and investment climate). The DML extension strengthens efficiency and relaxes functional form, but it does not substitute for exogenous variation. This is well understood by the target audience, but stating it prevents over-interpretation.

Briefly discuss the nuisance model R-squared in the identification context. The diagnostics table (Table 5) reports average nuisance R-squared values of -0.43 (outcome) and -0.18 (treatment). The paper correctly notes this reflects small-sample ML overfitting and that the best learner (Forest) achieves positive R-squared. From an identification standpoint, low R-squared in the treatment model m(X) means the controls explain little of the variation in tax rates — which actually supports the plausibility of the partially linear model, since it implies the treatment has substantial residual variation not collinear with X. This could be mentioned as a minor point in the diagnostics discussion to reassure readers.

Consider noting that the GATE gradient (Q1: -1.72 to Q5: +1.34) is descriptive, not causal at the subgroup level. The BLP test and GATE analysis in Section 3.2 are informative, and the CLAN finding about trade freedom is suggestive. However, the GATE estimates are conditional on predicted CATEs from a first-stage model, and in N = 61 with 12 quintile bins of approximately 12 observations each, the extreme quintile estimates are necessarily imprecise (as the wide CIs confirm). The paper could add a sentence noting that the heterogeneity results should be interpreted as exploratory evidence of effect variation rather than as well-identified subgroup causal effects.

Round: 1 Overall verdict: Minor revision

Methodological contribution

The DML extension is well-suited to Djankov et al. (2010). The original setting – cross-country OLS with a continuous treatment (corporate tax rates) and 12 controls in a sample of N=50–61 – is exactly the regime where DML offers value: p/N is large (~0.2), OLS with many controls is inefficient, and the PLR model cleanly nests the original specification. Comparing six ML learners across all 12 specifications provides genuine evidence about the robustness of the original result and aligns well with the Baiardi & Naghi (2024) benchmark. The systematic finding that DML recovers larger and more significant negative coefficients than OLS, particularly for Investment and FDI, is the central value added.

DML checklist

| # | Item | Verdict | Notes |

|---|---|---|---|

| 1 | Model class (PLR) correct | PASS | PLR is correct for continuous treatment with no instruments. |

| 2 | K-fold adaptive to N | PASS | K=2 for N<200. All specifications use N=50–61, so K=2 is appropriate. |

| 3 | n_rep >= 20, median aggregation, adjusted SE | SUGGESTION (S1) | n_rep=5 with median aggregation and B&N adjusted SE formula. See S1 below. |

| 4 | “Best” selected by lowest nuisance MSE | PASS | Code confirms: best_method = min(methods_ok, key=lambda m: learner_nuisance_mse[m]['mse_outcome']). Selection is by outcome nuisance MSE, not p-value. |

| 5 | >= 5 method classes present | PASS | 6 learners (Lasso, ElasticNet, DecisionTree, Boosting, Forest, NeuralNet) + Ensemble + Best = 8 columns. |

| 6 | Nuisance R^2 reported and honestly interpreted | PASS | Paper Table 4 discusses negative average R^2 and reports Forest best R^2_Y=0.051, R^2_D=0.090. Diagnostics section acknowledges this is a small-sample limitation. |

| 7 | Score function appropriate | PASS | Default DoubleML PLR score (partialling out) is used, which is correct. |

| 8 | CIs from DML SEs (not naive OLS post-residualisation) | PASS | CIs are computed as median_coef +/- 1.96 * SE_adj using the B&N adjusted formula. |

| 9 | Lasso coefficient diagnostics reported | SUGGESTION (S2) | lasso_diagnostics: null for all specifications in the JSON. The extract_lasso_diagnostics function exists in the code but appears to have failed silently (likely a key-format issue across DoubleML versions). |

| 10 | BLP heterogeneity test reported before GATE | PASS | BLP beta_2 = 1.039, p < 0.001. Correctly reported before GATE analysis. |

| 11 | GATE groups based on predicted CATE quintiles | PASS | Code uses pd.qcut(S_proxy, q=5) on predicted CATE from the Best learner. |

| 12 | Jointly valid CIs for GATE | PASS | Code confirms gate_obj.confint(level=0.95, joint=True). |

| 13 | CLAN included | PASS | CLAN is reported in the paper (Table 5) and JSON (12 control variables compared). See E1 below for a labeling concern. |

Essential issues

E1. CLAN labeling inversion: “most affected” and “least affected” groups appear swapped.

The CLAN code defines top_q = S_proxy >= np.percentile(S_proxy, 80) – that is, the top quintile of the predicted CATE distribution. Since the overall treatment effect is negative (taxes reduce investment), a high predicted CATE means the country is least negatively affected (near-zero or positive CATE), while a low predicted CATE means the country is most negatively affected. However, the code labels the top-CATE group as “Most” (mapped to mean_most_affected in the JSON) and the bottom-CATE group as “Least” (mapped to mean_least_affected).

This means the paper’s CLAN discussion has the group labels inverted: when it states “the most affected countries have lower trade freedom (mean 6.72) than the least affected (mean 7.52),” it is actually describing the least harmed countries (Q5, positive CATE) as “most affected” and the most harmed countries (Q1, negative CATE) as “least affected.”

Scientific justification: This does not change any computed values (the t-test and means are correct), but it inverts the substantive interpretation of which country characteristics predict larger or smaller treatment effects. The CLAN paragraph in the paper draws a causal-intuition narrative (“countries with less open economies suffer more from corporate tax increases”) that is backwards relative to the actual GATE gradient. Without correction, readers will draw the wrong policy conclusion from the CLAN table.

Fix: Swap the labels so that bot_q (lowest predicted CATE = most negative effect = most harmed) is “most affected” and top_q (highest predicted CATE = least negative effect) is “least affected.” Update the CLAN discussion in the paper accordingly.

Suggestions

S1. Consider increasing n_rep from 5 to at least 20.

The current n_rep=5 is below the standard recommendation of n_rep >= 20 (Chernozhukov et al., 2018). The B&N adjusted SE formula is designed to account for cross-split variation, but with only 5 repetitions the median estimate may have non-trivial sampling noise. The cross-fit stability diagnostic (SD across reps = 0.016, ratio = 0.21 for Forest) is reassuring for the primary specification, so this is not blocking. However, increasing to n_rep=20 would better justify the asymptotic validity of the adjusted SE. This is a suggestion because the current results are stable and match the B&N benchmark, so there is no evidence of bias from low n_rep.

S2. Report Lasso variable selection diagnostics.

The lasso_diagnostics field is null for every specification, meaning Lasso/ElasticNet coefficient counts and top selected variables are not reported. The extract_lasso_diagnostics function exists but apparently fails due to a key-format mismatch across DoubleML versions. Reporting which controls Lasso retains (and which it zeros out) would help readers understand why DML recovers larger coefficients than OLS – the standard interpretation is that OLS is inefficient because it tries to estimate all 12 control coefficients, while Lasso shrinks irrelevant ones. This is informative but not essential.

S3. Discuss the “Best” learner selection criterion more transparently.

The “Best” learner is selected by lowest outcome nuisance MSE (mse_outcome), but the best method for the treatment nuisance can differ. For the primary specification (Investment ~ 5yr effective rate), Best selects Forest (R^2_Y = 0.051) for the outcome equation but the JSON records best_treatment_method: ElasticNet. The paper and diagnostics table do not explain this asymmetry. A brief note clarifying that “Best” refers to the learner with the lowest outcome MSE (and why this is the standard choice) would improve transparency.

S4. Note the DecisionTree anomaly in some specifications.

In Panel C (Business density ~ statutory), the Best learner is DecisionTree with R^2_Y = 0.115 and coefficient -0.037 (p = 0.70). In the same panel, Forest yields -0.089 (p = 0.11) and Lasso yields -0.115 (p = 0.04). DecisionTree is selected as “Best” on MSE grounds, but its coefficient is the smallest and least significant. This is not wrong – MSE-based selection is methodologically correct – but it highlights that “Best” does not always mean “most significant” or “most informative.” A sentence acknowledging this would prevent misunderstanding.

Round: 1 Overall verdict: Minor revision

Replication assessment

The replication is a clear success. All four reference specifications (Investment, FDI, Business density, Entry rate regressed on the five-year effective tax rate with 12 controls) replicate within a 0.69% maximum deviation, with exact sample-size matches (\(N = 61, 61, 60, 50\)). All signs are correct. The 4/4 pass rate against the Baiardi & Naghi (2024) benchmarks leaves no doubt about the fidelity of the data processing and regression implementation.

Essential issues

None — replication is adequate and the core DML results are well-supported.

Suggestions

Lasso p-value rounding in FDI panel (Panel B). The paper reports the FDI Lasso estimate as “\(-0.148\), \(p = 0.05\)”. The JSON records \(p = 0.053\), which strictly rounds to \(0.05\) only at one decimal place. Since the narrative frames Lasso as “significant at 5%,” the authors should either report the p-value to two decimal places (\(p = 0.05\), which is defensible) or explicitly note “marginally significant” to avoid any impression of cherry-picked rounding. This is cosmetic but worth fixing in one pass.

Nuisance \(R^2\) discussion could be tightened. The diagnostics table correctly reports the FAIL flag (average \(R^2_Y = -0.43\), \(R^2_D = -0.18\)) and the discussion paragraph provides a clear explanation: small-sample ML overfitting drives negative averages, while the best learner (Forest) achieves positive \(R^2\). This is transparent and adequate. A small improvement would be to note explicitly that the B&N adjusted SE formula already penalises unstable nuisance estimates, so the FAIL flag does not mechanically invalidate the confidence intervals. One sentence would suffice.

Neural network sign disagreement across panels. The paper correctly highlights that NeuralNet diverges in the primary specification (Investment panel: \(+0.063\), \(p = 0.61\)) and attributes this to small-sample instability. It is worth noting for completeness that the NeuralNet agrees on a negative sign in the other three panels (FDI: \(-0.113\); Business density: \(-0.048\); Entry rate: \(-0.191\), \(p < 0.01\)). This strengthens the argument that the Investment-panel disagreement is noise rather than a systematic concern. A brief parenthetical would suffice.

Forest plot inspection. The forest plot displays all four panels (A–D) with OLS, six individual learners, Ensemble, and Best for the five-year effective rate. Visual inspection confirms: (a) all DML point estimates in Panels A, B, and D are left of OLS, consistent with the narrative that DML recovers larger effects; (b) confidence intervals for tree-based methods exclude zero in Panel A, matching the reported significance; (c) the NeuralNet estimate in Panel A is the only point clearly to the right of zero; (d) Panel D shows the tightest clustering of learners, consistent with the claim that all six are significant. The plot is accurate and well-constructed. One minor visual note: the Ensemble and Best markers use a different colour (green) which aids readability, but no legend explains the colour coding — adding a legend entry would improve standalone interpretability.

Unified verdict: Minor revision

Contribution consensus

All three referees agree that this RECAST adds genuine value. The original Djankov et al. (2010) setting – cross-country OLS with a continuous treatment, 12 controls, and N approximately 60 – is exactly the regime where DML offers efficiency gains. The close match between the pipeline’s Forest estimate (-0.211) and the independent Baiardi & Naghi (2024) benchmark (-0.204) validates the implementation. The heterogeneous treatment effect analysis (BLP test, GATE, CLAN) extends the original paper in a direction neither Djankov et al. nor Baiardi & Naghi pursued, providing novel evidence that the tax-investment relationship varies systematically across countries. The contribution bar is met; the bar for blocking is correspondingly higher.

Essential issues (must be addressed — paper cannot stand as-is)

| # | Issue | Raised by | Scientific justification | Action |

|---|---|---|---|---|

| E1 | CLAN labeling inversion: the code maps top_q (highest predicted CATE = least negatively affected) to “most affected” and bot_q (lowest predicted CATE = most negatively affected) to “least affected,” inverting the group labels in the JSON output. |

R2 | The paper’s CLAN narrative draws a substantive conclusion (“countries with less open economies suffer more from corporate tax increases”) that may be backwards relative to the actual GATE gradient if the means are assigned to the wrong groups. Without correction, readers draw the wrong policy implication from the CLAN table. The paper text correctly defines Q1 as “most affected” and Q5 as “least affected” (line 229), but the underlying data feeding those means may be swapped in the code. | Fix the CLAN label assignment in code_build/04_dml_extension.ipynb so that bot_q (lowest CATE = most harmed) maps to “most affected” and top_q (highest CATE = least harmed) maps to “least affected.” Re-run the notebook and verify that the CLAN means in the JSON match the corrected labels. Update the paper text only if the reported means change. |

Suggestions (would improve but are optional)

| # | Issue | Raised by | Action |

|---|---|---|---|

| S1 | Acknowledge the selection-on-observables limitation in the conclusion. Neither OLS nor DML resolves potential endogeneity from omitted confounders. | R1 | Add one sentence to the conclusion noting that the DML extension strengthens efficiency and relaxes functional form but does not substitute for exogenous variation. |

| S2 | Tighten the nuisance R-squared discussion. (a) In the identification context, note that low R-squared in the treatment model m(X) implies substantial residual treatment variation, which supports the PLR model. (b) Note that the B&N adjusted SE formula already penalises unstable nuisance estimates, so the FAIL flag does not mechanically invalidate the CIs. | R1, R3 | Add one to two sentences to the diagnostics discussion combining both points. |

| S3 | Note that the GATE gradient is descriptive, not causal at the subgroup level. With N = 61 split into quintiles of ~12 observations, extreme-quintile estimates are imprecise. | R1 | Add a sentence to the GATE discussion noting the exploratory nature of the heterogeneity results. |

| S4 | Consider increasing n_rep from 5 to at least 20 for better asymptotic justification of the adjusted SE. | R2 | Optional. Current results are stable (cross-fit ratio = 0.21) and match the benchmark, so there is no evidence of bias. If computationally feasible, increase; otherwise, add a sentence justifying n_rep = 5 given the small sample. |

| S5 | Report Lasso variable selection diagnostics. The lasso_diagnostics field is null due to a likely key-format issue. |

R2 | Fix the extract_lasso_diagnostics function if possible. If not feasible, add a footnote noting the limitation. |

| S6 | Clarify the “Best” learner selection criterion. Best is selected by lowest outcome nuisance MSE, but the best treatment-model learner can differ. | R2 | Add a brief note in the methods section explaining that “Best” refers to the learner with the lowest outcome MSE and why this is the standard choice. |

| S7 | Note the DecisionTree anomaly in Panel C: Best selects DecisionTree on MSE grounds, but its coefficient is smallest and least significant. | R2 | Add one sentence acknowledging that MSE-based selection does not always select the most significant learner. |

| S8 | Report FDI Lasso p-value more precisely. JSON records p = 0.053; paper says p = 0.05. | R3 | Either report to two decimal places (p = 0.05) with a note, or say “marginally significant (p = 0.053).” |

| S9 | Note that NeuralNet agrees on a negative sign in three of four panels, strengthening the argument that the Investment-panel divergence is noise. | R3 | Add a parenthetical to the neural network discussion. |

| S10 | Add a legend entry to the forest plot explaining the green colour for Ensemble and Best markers. | R3 | Minor visual improvement to Figure 1. |

Blocking issues (require re-running a notebook)

| # | Issue | Raised by | Notebook to fix | Specific action |

|---|---|---|---|---|

| E1 | CLAN label inversion | R2 | code_build/04_dml_extension.ipynb |

Swap the mapping so bot_q (lowest CATE) = “most affected” and top_q (highest CATE) = “least affected.” Re-run the notebook, verify corrected JSON, and update paper text if the reported CLAN means change. |

Downgraded items

None. R2’s E1 is the only issue marked essential across all three reports, and it has clear scientific justification (inverted substantive interpretation). No items were downgraded.

Referee disagreements

None. All three referees reach the same verdict (minor revision) and agree on the core assessment: replication is successful, the DML extension is well-implemented, and the paper adds genuine value. No referee contradicts another’s findings.

Already resolved (suppressed from this round)

Not applicable — this is Round 1 with no prior changelogs.

RERUN_NEEDED: yes

Final Review Report

Paper: The Effect of Corporate Taxes on Investment and Entrepreneurship Original authors: Djankov, S., Ganser, T., McLiesh, C., Ramalho, R. and Shleifer, A. (American Economic Journal: Macroeconomics, 2010) Benchmark: Baiardi, A. and Naghi, A. A. (Econometrics Journal, 2024) Rounds completed: 1 of 3 Final verdict: Ready

The original Djankov et al. (2010) paper estimates the effect of corporate tax rates on investment and entrepreneurship using cross-country OLS with 12 controls and roughly 60 observations – a setting where p/N is around 0.2 and OLS is demonstrably inefficient. The DML extension relaxes the linear functional-form assumption on the nuisance functions, allows data-driven variable selection across the 12-dimensional control set, and uses cross-fitting to produce debiased treatment effect estimates. The central finding is that DML recovers larger and statistically significant negative effects where OLS was insignificant: for the primary specification (Investment ~ five-year effective rate), the DML Forest estimate is -0.211 (p = 0.008) compared to OLS -0.189 (p = 0.10). This closely matches the independent Baiardi and Naghi (2024) Forest benchmark of -0.204 (SE = 0.094). Beyond replication and DML, the heterogeneous treatment effect analysis – BLP test, GATE quintiles, and CLAN – is a genuine extension that neither the original paper nor Baiardi and Naghi pursued.

“Would I be pleased to have written this, flaws and all?” Yes. The replication is essentially exact, the DML extension is methodologically sound and closely matches an independent benchmark, and the HTE analysis adds novel descriptive evidence. The known limitations (small-sample nuisance R-squared, N ~ 60 GATE quintiles) are honestly disclosed and do not undermine the main conclusions.

| Specification | Published | Replicated | Delta (%) | N | Status |

|---|---|---|---|---|---|

| Investment ~ 5yr effective rate | -0.189 | -0.189 | 0.13% | 61 | PASS |

| FDI ~ 5yr effective rate | -0.095 | -0.095 | 0.35% | 61 | PASS |

| Business density ~ 5yr effective rate | -0.070 | -0.070 | 0.69% | 60 | PASS |

| Entry rate ~ 5yr effective rate | -0.133 | -0.133 | 0.23% | 50 | PASS |

Overall: 4/4 reference specifications replicated within the 5% threshold (worst deviation: 0.69%). All signs correct, all sample sizes match exactly. The replication also ran the full 4 x 3 = 12 OLS regression matrix (four outcomes by three tax measures). This is a clean replication.

Primary specification: Investment as % of GDP ~ Five-year effective tax rate (N = 61)

| Method | Coef | SE | 95% CI | p-value |

|---|---|---|---|---|

| OLS (original) | -0.189 | 0.116 | [-0.416, 0.038] | 0.10 |

| Forest (DML Best) | -0.211 | 0.079 | [-0.366, -0.056] | 0.008 |

| Boosting (DML) | -0.234 | 0.105 | [-0.440, -0.028] | 0.02 |

| ElasticNet (DML) | -0.201 | 0.091 | [-0.379, -0.017] | 0.03 |

| Lasso (DML) | -0.238 | 0.130 | [-0.493, 0.017] | 0.07 |

| Ensemble (DML) | -0.148 | 0.121 | [-0.385, 0.089] | 0.22 |

| B&N benchmark (Forest) | -0.204 | 0.094 | – | – |

Key finding: DML with Random Forest recovers a treatment effect 12% larger than OLS and achieves significance at the 1% level where OLS cannot reject zero. Five of six learners agree on a negative sign; the neural network diverges (+0.063, p = 0.61), which is expected small-sample noise and does not recur in the other three panels. The 3.4% difference between our Forest estimate (-0.211) and the Baiardi and Naghi benchmark (-0.204) validates the pipeline implementation.

| Issue | Raised (round) | Category | Resolved? | How |

|---|---|---|---|---|

| E1: CLAN label inversion (most/least affected groups swapped in code) | 1 (R2) | Essential | Yes | Swapped labels in notebook; re-ran pipeline; updated paper narrative and conclusion |

| S1: Selection-on-observables caveat in conclusion | 1 (R1) | Suggestion | Addressed | Two sentences added to conclusion |

| S2: Nuisance R-squared discussion (residual variation + B&N SE penalty) | 1 (R1, R3) | Suggestion | Addressed | Two sentences added to diagnostics discussion |

| S3: GATE gradient is descriptive, not causal | 1 (R1) | Suggestion | Addressed | Sentence added to GATE paragraph |

| S4: Increase n_rep from 5 to >= 20 | 1 (R2) | Suggestion | Deferred | Current results stable (cross-fit ratio = 0.21); matches benchmark |

| S5: Fix Lasso diagnostics extraction | 1 (R2) | Suggestion | Deferred | Pipeline-level fix; no impact on core estimates |

| S6: Clarify “Best” learner selection criterion | 1 (R2) | Suggestion | Addressed | Sentence added to results section |

| S7: DecisionTree anomaly in Panel C | 1 (R2) | Suggestion | Addressed | Sentence added to Panel C bullet |

| S8: FDI Lasso p-value precision (0.053 vs 0.05) | 1 (R3) | Suggestion | Addressed | Changed to “marginally significant at p = 0.053” |

| S9: NeuralNet cross-panel sign agreement | 1 (R3) | Suggestion | Addressed | Parenthetical added to primary specification paragraph |

| S10: Forest plot legend for green markers | 1 (R3) | Suggestion | Deferred | Visual improvement; no substantive impact |

One round was sufficient. The sole essential issue (CLAN label inversion) was corrected and the notebook was re-run. Seven of ten suggestions were addressed in the paper text; three were deferred for defensible reasons.

No essential issues remain. The paper is ready to share. The suggestions below would strengthen it further but are optional.

| # | Item | Category | Action needed |

|---|---|---|---|

| 1 | n_rep = 5 (below the recommended >= 20) | Suggestion | Increase to 20 if computationally feasible; current results are stable and match the benchmark, so this is not urgent |

| 2 | Lasso variable selection diagnostics are null | Suggestion | Fix the extract_lasso_diagnostics function at the pipeline level; add a footnote in the paper noting the limitation |

| 3 | Forest plot lacks legend entry for green Ensemble/Best markers | Suggestion | Add legend to Figure 1 for standalone interpretability |

Category: Essential = must fix before sharing. Suggestion = would improve, optional.

The RECAST confirms the original finding. Higher corporate taxes are associated with lower investment and entrepreneurship. DML strengthens this conclusion by recovering larger and statistically significant negative effects across all four outcomes, particularly for the primary specification where OLS was insignificant.

Identification is selection-on-observables throughout. Neither OLS nor DML resolves potential endogeneity from omitted confounders (e.g., political institutions that jointly determine tax policy and investment climate). The paper now states this explicitly in the conclusion. Readers should not interpret the DML results as stronger causal evidence than the original OLS – the gain is in efficiency and robustness to functional form, not in identification.

The nuisance model quality flag (FAIL) is a known small-sample limitation, not a fatal flaw. Average cross-validated R-squared is negative because most ML learners overfit with N ~ 60 and 12 controls. The best learner (Random Forest) achieves positive R-squared (0.051 for outcome, 0.090 for treatment), the cross-fit stability ratio is low (0.21), and the estimate matches the independent benchmark. The B&N adjusted SE formula already penalizes unstable nuisance estimation.

The heterogeneous treatment effect results are exploratory. With quintiles of approximately 12 observations each, the GATE gradient (-1.72 to +1.34) is descriptive rather than precisely estimated. The CLAN finding that trade openness correlates with larger negative effects is economically suggestive but should not be over-interpreted without a larger sample or additional identification strategy.

Manual check recommended before citing specific CLAN means. The CLAN labels were corrected in Round 1 (the original code had “most” and “least” affected swapped). The corrected JSON now shows no statistically significant CLAN differences at the 10% level for any control variable, including trade freedom (p = 0.21). The paper text reports means of 7.52 vs. 6.72 for trade freedom, but the corrected JSON records 7.38 vs. 6.87. This small discrepancy likely reflects the pre-correction vs. post-correction values; verify the paper’s CLAN numbers match the final

hte_results.jsonbefore treating them as definitive.

Comments to the authors

This is a well-executed RECAST. The replication is essentially exact (0.69% worst deviation, all sample sizes matching), and the DML extension provides a meaningful robustness check that strengthens the original paper’s conclusions. The close alignment with the independent Baiardi & Naghi (2024) Forest estimate (-0.211 vs. -0.204) is reassuring and suggests the pipeline is correctly implemented. The choice of PLR as the DML model is appropriate given that the original design uses OLS with a continuous treatment and no instruments.

The sign agreement across learners (5/6 negative) and the cross-fit stability (ratio = 0.21) both support the credibility of the main DML result. The neural network divergence is appropriately flagged and convincingly attributed to small-sample variance. The heterogeneous treatment effect analysis is a genuine contribution beyond what the original paper or Baiardi & Naghi (2024) provide, and the finding that trade openness moderates the tax-investment relationship is economically intuitive. Overall, the identification strategy is preserved and well-documented, and the paper should proceed with at most minor revisions.